Interaction Models: How They Try to Solve the LLM Walkie-Talkie Problem

Have you ever used the voice mode in ChatGPT or Claude? If so, you've probably noticed how it stops the moment it thinks you're about to speak, holds an awkward beat before replying, and then talks at you in one uninterruptible monologue until it's done. It works, and it can feel almost magical the first time. But it isn't how human beings actually talk to one another.

When two people are deep in conversation, they talk over each other. They start a sentence, pause halfway, get interrupted, agree mid-thought with a quick "yeah, yeah," and keep going. A third person jumps in, the first one nods, the second one finishes their point anyway, and somehow nobody loses the thread. Speech, gesture, expression, and silence are all happening at once, in both directions, all the time. That is what real-time collaboration sounds like — and for very good technical reasons, no large language model on the market today can actually do it.

What if there were another way? What if, instead of taking turns through a microphone, you could talk to a model the way you talk to a colleague sitting next to you — one that listens while it speaks, sees while it listens, and reacts in the same continuous flow of time that you live in?

There is. And it has a name: an interaction model. On May 12, 2026, Mira Murati's Thinking Machines Lab published a research preview of exactly this idea, and it represents what may be the biggest rethink of how humans and AI talk to each other since ChatGPT first shipped voice mode. To understand why it matters, we first have to understand what's actually happening under the hood when you talk to today's voice assistants — and why it feels just a little bit like talking to someone over a walkie-talkie.

The Walkie-Talkie Problem: How Today's Voice Mode Actually Works

When you open voice mode in ChatGPT, Claude (Opus), or Gemini Live, you are not really talking to a model that hears you. You are talking to an assembly line. Engineers call this a cascaded architecture, and the metaphor is almost literal: your voice falls down a waterfall of separate components, each one doing a single, narrow job before passing the result to the next.

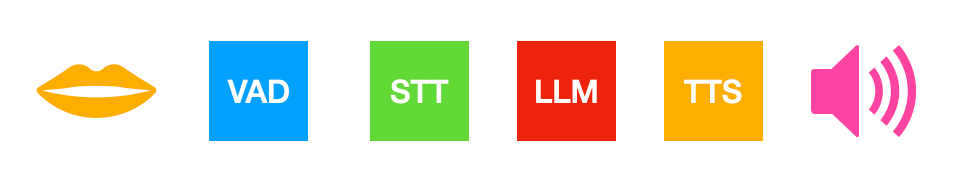

The pipeline usually looks like this. First, a voice-activity detector (VAD) listens to your microphone and tries to figure out when you have stopped talking. Then a speech-to-text model transcribes what you said into written words. Those words are handed to a large language model, which writes a reply in text. Finally, a text-to-speech model turns the reply back into a human-sounding voice and plays it through your speakers. That is four separate components, often built by different teams, and four separate moments where latency, errors, and lost nuance can creep in. Tone, emphasis, and hesitation are the first casualties: once your speech has been flattened into text, the language model never hears them.
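To make the assembly line concrete, here is a minimal sketch of a cascade in Python. Everything in it is illustrative: the `Stage` class, the lambda stand-ins for real models, and especially the latency figures are assumptions we made up for the example, not measurements of any product. The point is only that each stage must finish before the next begins, so the delays add up.

```python
from dataclasses import dataclass
from typing import Any, Callable, List, Tuple


@dataclass
class Stage:
    name: str
    run: Callable[[Any], Any]   # stand-in for a real model call
    latency_ms: float           # made-up figure, for illustration only


def run_cascade(stages: List[Stage], payload: Any) -> Tuple[Any, List[Tuple[str, float]]]:
    """Run stages strictly in order; each must finish before the next starts."""
    elapsed = 0.0
    trace = []
    for stage in stages:
        payload = stage.run(payload)
        elapsed += stage.latency_ms
        trace.append((stage.name, elapsed))
    return payload, trace


# Hypothetical four-stage voice pipeline with placeholder latencies.
PIPELINE = [
    Stage("vad", lambda audio: audio, 500.0),                         # wait for silence
    Stage("stt", lambda audio: "what is the capital of france", 300.0),
    Stage("llm", lambda text: "Paris.", 400.0),
    Stage("tts", lambda text: f"<audio for {text!r}>", 200.0),
]

reply, trace = run_cascade(PIPELINE, b"\x00\x01")
for name, done_at in trace:
    print(f"{name:>3} finished at {done_at:.0f} ms")
```

Notice that the string handed to the `llm` stage carries no trace of how the question was spoken. Whatever the real numbers are in a given system, that loss and the summed latency are structural to the design.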

This is the walkie-talkie. You press the button by speaking. You release the button by going quiet. The model can only respond once it is sure you have let go. And while it is talking, it is essentially holding down its own button — it cannot hear you, cannot see you, cannot react to a confused look on your face or a quick "wait, no" you blurt out. It is locked into a strict alternation: input, output, input, output. Computer scientists call this turn-based interaction. Thinking Machines, in their announcement, called it the collaboration bottleneck.

More recent systems like OpenAI's GPT-Realtime have collapsed some of those steps into a single speech-to-speech model — the audio in, audio out parts are now one network. That helps with latency and preserves some tone and emotion. But the underlying choreography is still turn-based. Somewhere in the stack, a voice-activity detector still has to guess when your turn ends, and the model still produces one whole reply before it can hear you again. The pipeline got shorter; the conversational dance did not change.
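Why is guessing the end of a turn so awkward? A common textbook approach is to watch the loudness of short audio frames and declare the turn over after a fixed run of quiet ones. The sketch below assumes 20 ms frames, an arbitrary energy threshold, and a "hangover" length we picked for illustration; production detectors are more sophisticated, but the trade-off is the same.

```python
from typing import List, Optional


def detect_end_of_turn(frame_energy: List[float],
                       threshold: float = 0.1,
                       hangover_frames: int = 25) -> Optional[int]:
    """Return the frame index where the turn is declared over, or None.

    The turn ends once `hangover_frames` consecutive quiet frames follow
    at least one frame of speech.
    """
    heard_speech = False
    quiet_run = 0
    for i, energy in enumerate(frame_energy):
        if energy >= threshold:
            heard_speech = True
            quiet_run = 0
        elif heard_speech:
            quiet_run += 1
            if quiet_run >= hangover_frames:
                return i
    return None


# Assumed 20 ms frames: speak 200 ms, think for 600 ms, speak 200 ms, go quiet.
energies = [0.5] * 10 + [0.0] * 30 + [0.5] * 10 + [0.0] * 50

impatient = detect_end_of_turn(energies, hangover_frames=25)  # 500 ms of quiet
patient = detect_end_of_turn(energies, hangover_frames=40)    # 800 ms of quiet
print(impatient, patient)
```

With the shorter hangover the detector fires in the middle of the thinking pause and cuts the speaker off. With the longer one it waits through the pause, but then every reply starts at least 800 ms after the speaker has finished. No single setting fixes both problems, which is why a fixed timer outside the model is such a blunt instrument.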

What an Interaction Model Actually Is

An interaction model is what you get when you stop bolting interactivity onto a language model from the outside and instead bake it into the model itself, from the very first day of training. Thinking Machines describes it this way: "models that handle interaction natively rather than through external scaffolding." Instead of an LLM wrapped in a costume of helper components, the model treats listening, watching, thinking, and speaking as four things it does at the same time, the way you do.

Here is the simplest metaphor we can offer. A turn-based voice assistant is two people sending each other letters very, very fast. A speech-to-speech model is two people on a phone call where only one person can hold the receiver at a time. An interaction model is two people sitting across a table from each other — both can see, both can hear, both can react, both can interrupt, both can nod or wince or jump in mid-sentence to say "actually, I meant the blue one." Nothing about the conversation has to wait for a turn to formally end, because there are no turns in the first place. There is only time, flowing forward, with information moving in both directions continuously.

Under the Hood: Micro-Turns, Early Fusion, and a Background Brain

So how do you actually build something like this? Thinking Machines' preview model, called TML-Interaction-Small, leans on three architectural ideas that are worth understanding in plain language. The model itself is a 276-billion-parameter mixture-of-experts network with about 12 billion parameters active for any given token. That is large by any measure, but the cleverness is in the choreography, not just the size.

The first idea is time-aligned micro-turns. Instead of consuming a whole user utterance and then producing a whole reply, the model slices time into tiny 200-millisecond slivers — roughly the width of a single syllable. In every slice, it does a little listening and a little speaking. Imagine a jazz duet where each musician plays one note, listens for one note, plays another, listens again, all in such fast alternation that to a human ear it sounds like they are playing simultaneously. That is the trick. The model is technically still taking turns, but the turns are so small and so tightly interleaved that the experience is indistinguishable from being present together.
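A toy version of that loop fits in a few lines. In the sketch below, only the 200 ms slice width comes from the description above; the `<sil>` marker, the event tuples, and the "policy" function that stands in for the model are all hypothetical. The toy policy simply keeps reciting a planned reply until it hears the word "wait".

```python
from typing import Callable, Iterable, List, Tuple

SLICE_MS = 200          # slice width taken from the article
SILENCE = "<sil>"       # hypothetical marker for "nothing said in this slice"

Event = Tuple[int, str, str]   # (start time in ms, "in" or "out", content)


def run_micro_turns(user_slices: Iterable[str],
                    step: Callable[[List[Event]], str]) -> List[Event]:
    """Alternate one slice of listening with one slice of speaking."""
    sequence: List[Event] = []
    for i, heard in enumerate(user_slices):
        t = i * SLICE_MS
        sequence.append((t, "in", heard))     # listen for one slice
        said = step(sequence)                 # decide what to emit right now
        sequence.append((t, "out", said))     # speak during the same slice
    return sequence


def make_toy_policy(planned_words: List[str]) -> Callable[[List[Event]], str]:
    """Toy stand-in for the model: keep talking until the user says 'wait'."""
    remaining = iter(planned_words)
    interrupted = False

    def step(sequence: List[Event]) -> str:
        nonlocal interrupted
        if sequence[-1][2] == "wait":         # content of the latest input slice
            interrupted = True
        return SILENCE if interrupted else next(remaining, SILENCE)

    return step


events = run_micro_turns(
    [SILENCE, SILENCE, "wait", "no"],
    make_toy_policy(["the", "answer", "is", "forty-two"]),
)
for t, direction, content in events:
    print(f"{t:4d} ms  {direction:>3}  {content}")
```

The reply is abandoned in the very slice where the interruption arrives, because input and output share one timeline. A turn-based system would have finished all four words before listening again.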

Crucially, silence and overlap are now part of the data the model sees. In a normal LLM transcript, a long pause and a short pause look identical — they are just gaps between tokens. In an interaction model, every 200ms slice exists, even if no one is talking in it. That means the model knows the difference between "you stopped to think" and "you finished your sentence." It can hear you trail off, hear you take a breath, hear you start to say something while it is still speaking. The voice-activity detector, the little component that used to live outside the model and guess at turn boundaries, is gone. Turn-taking is now something the model itself learns, the same way a person learns it: by paying attention.
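The difference is easy to see with two made-up recordings of the same three words. In this sketch a slice is either a word or `None` for silence; that representation is our simplification for illustration, not the model's actual input format.

```python
from typing import List, Optional

SLICE_MS = 200  # slice width taken from the article


def to_transcript(slices: List[Optional[str]]) -> str:
    """What a text-only pipeline keeps: the words, with all timing discarded."""
    return " ".join(s for s in slices if s is not None)


def pause_lengths_ms(slices: List[Optional[str]]) -> List[int]:
    """Length of each silent gap between two stretches of speech."""
    pauses = []
    quiet_run = 0
    seen_speech = False
    for s in slices:
        if s is None:
            quiet_run += 1
        else:
            if seen_speech and quiet_run:
                pauses.append(quiet_run * SLICE_MS)
            seen_speech = True
            quiet_run = 0
    return pauses


quick = ["so", None, "I", "think"]
hesitant = ["so", None, None, None, None, None, "I", "think"]

print(to_transcript(quick) == to_transcript(hesitant))    # same words
print(pause_lengths_ms(quick), pause_lengths_ms(hesitant))  # different timing
```

Both recordings collapse to the same transcript, yet one contains a 200 ms breath and the other a full second of hesitation. Only the slice-by-slice view keeps that information available for the model to learn from.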

The second idea is encoder-free early fusion. In most existing multimodal systems, audio is first chewed up by a big specialized encoder (something Whisper-like), images get pushed through their own vision encoder, and only then do their squeezed-down representations meet the language model in the middle. The interaction model skips most of that. Audio comes in as a lightweight representation called dMel and is embedded by a tiny layer. Video frames are split into 40x40 pixel patches and passed through a small projector. Everything — text, audio chunks, image patches — lands inside the same transformer almost raw, mixed together from the start. The metaphor here is taste: rather than separately cooking three ingredients and stirring them at the end, you simmer everything in the same pot from the beginning. The flavors blend in ways they cannot blend if you keep them apart.
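For the video side, "split into patches" is a simple operation, sketched here in plain Python. Only the 40x40 patch size comes from the description above. We assume a single-channel frame stored as a list of rows whose height and width are multiples of 40, and we stop before the projector, whose details we do not know.

```python
from typing import List

PATCH = 40  # patch side length in pixels, as described in the article


def patchify(frame: List[List[int]], patch: int = PATCH) -> List[List[int]]:
    """Cut a 2D frame into non-overlapping patch x patch tiles.

    Tiles are returned in row-major order, each flattened to one vector.
    """
    height, width = len(frame), len(frame[0])
    if height % patch or width % patch:
        raise ValueError("frame dimensions must be multiples of the patch size")
    tiles = []
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            tiles.append([frame[r][c]
                          for r in range(top, top + patch)
                          for c in range(left, left + patch)])
    return tiles


# Hypothetical 80 x 120 grayscale frame whose pixel value encodes its position.
frame = [[row * 120 + col for col in range(120)] for row in range(80)]
tiles = patchify(frame)
print(len(tiles), len(tiles[0]))
```

Each flattened tile would then pass through a small learned projection to become one input vector for the transformer, sitting in the same sequence as the text and audio tokens.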

The third idea is a two-brain system: a fast foreground interaction model paired with a slower, smarter background model. The foreground brain is the one that keeps the conversation alive — it listens, watches, talks, nods along, never goes silent for more than a heartbeat. When a question arrives that genuinely needs deep thought, web search, or a long tool call, the foreground hands a rich context package (not just a query, but the whole running conversation) over to the background model, which thinks at its own pace. Results stream back in pieces, and the foreground weaves them into the conversation at a moment that feels natural — when there is a pause, when you ask a follow-up, when the topic comes back around. Think of it as a quick-witted friend who keeps chatting with you at a dinner party while quietly texting an expert under the table for the answer to a hard question, then naturally working that answer into the conversation when the moment is right.
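The hand-off pattern can be sketched with Python's `asyncio`. This is a toy under loud assumptions: both "models" are ordinary functions, the trigger for delegating is simply a slice ending in a question mark, and the slice duration is shortened so the example runs quickly. It shows the shape of the idea, not Thinking Machines' implementation.

```python
import asyncio
from typing import List


async def background_model(context: List[str]) -> str:
    """Stand-in for slow reasoning, web search, or a long tool call."""
    await asyncio.sleep(0.05)
    return f"answer based on {len(context)} slices of context"


async def foreground(user_slices: List[str]) -> List[str]:
    """Keep responding every slice while a background task may be running."""
    spoken: List[str] = []
    context: List[str] = []
    pending = None
    for heard in user_slices:
        context.append(heard)
        if heard.endswith("?") and pending is None:
            # Hand over the whole running conversation, not just the question.
            pending = asyncio.create_task(background_model(list(context)))
            spoken.append("let me check")
        elif pending is not None and pending.done():
            spoken.append(pending.result())   # weave the result back in
            pending = None
        else:
            spoken.append("mm-hm")            # stay present in the meantime
        await asyncio.sleep(0.02)             # one shortened slice of wall-clock time
    if pending is not None:                   # conversation ended before the answer
        spoken.append(await pending)
    return spoken


slices = ["hi", "what's the weather?", "anyway", "so", "yeah", "ok"]
spoken = asyncio.run(foreground(slices))
print(spoken)
```

The foreground never blocks on the slow task: it produces something in every slice and picks up the answer in whichever later slice finds it ready.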

There is also a layer of unglamorous but essential infrastructure work that makes the whole thing tractable: streaming sessions that let 200ms chunks be appended to a persistent sequence in GPU memory rather than re-prefilled from scratch every time, custom kernels designed for very small, very frequent matrix operations, and a level of "trainer-sampler alignment" precise enough that the model behaves identically in training and in production. Together they are the difference between a beautiful research idea and something that can actually answer you in under half a second.
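A little arithmetic shows why the streaming sessions matter. The sketch below counts how many tokens have to be freshly encoded over one minute of conversation under each approach. The figure of 10 tokens per 200 ms chunk is an assumption we chose for the example, not a published number, and the count ignores the fact that attention over a growing cache still gets more expensive.

```python
def tokens_encoded_with_reprefill(num_chunks: int, tokens_per_chunk: int) -> int:
    """Every new chunk forces the whole history to be encoded again."""
    return sum(k * tokens_per_chunk for k in range(1, num_chunks + 1))


def tokens_encoded_with_streaming(num_chunks: int, tokens_per_chunk: int) -> int:
    """History stays cached; only the newly arrived chunk is encoded."""
    return num_chunks * tokens_per_chunk


CHUNKS_PER_MINUTE = 60_000 // 200   # 300 chunks of 200 ms
TOKENS_PER_CHUNK = 10               # assumed, not a published figure

reprefill = tokens_encoded_with_reprefill(CHUNKS_PER_MINUTE, TOKENS_PER_CHUNK)
streaming = tokens_encoded_with_streaming(CHUNKS_PER_MINUTE, TOKENS_PER_CHUNK)
print(reprefill, streaming, reprefill / streaming)
```

Re-encoding grows with the square of the session length while appending grows linearly, so under these assumptions the gap is already about 150x after a single minute and keeps widening.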

What This Unlocks That Voice Mode Cannot Do

The capabilities that fall out of this architecture are the part that makes the demo videos feel almost uncanny. The model can do live translation, speaking in English while you continue speaking in Spanish, with neither of you waiting for the other. It can commentate a sports game in real time, watching what happens on screen and narrating it as it unfolds. It can count your pushups out loud, watching the video stream and saying "one, two, three" at the right beats — a task that sounds trivial until you realize no commercial voice API today can do it, because none of them can decide to speak based on something they see rather than something they hear. It can be asked "correct my pronunciation as I read this passage" and actually do it in the moment, interjecting only when it hears a mistake, rather than waiting politely for you to finish the page.

It also gets time. Ask it to remind you to breathe in and out every four seconds, and it will actually do that, not because a calendar widget is firing off events, but because the model has a direct sense of elapsed time built into its perception of the world. Ask it how long it took you to run a mile, or how long it took you to write that function, and it can answer. These are not features that were added; they are side effects of the model living in continuous time rather than in a stack of discrete prompts.

On benchmarks, the picture matches what the architecture promises: TML-Interaction-Small sits at the frontier of interactivity quality on FD-bench v1.5, with a turn-taking latency of around 0.4 seconds, roughly three times faster than GPT-Realtime-2 in its fastest mode, while remaining competitive with non-thinking models on raw intelligence measures like Audio MultiChallenge. The headline is not that it is the smartest model in the world; it is that, for the first time, you do not have to choose between a model that thinks and a model that listens like a person.

Why This Is a Different Bet Than OpenAI and Anthropic Are Making

It is worth being honest about the philosophical bet underneath all of this. The dominant story in AI for the past two years has been autonomy: agents that go off on their own, run for hours, and come back with finished work. That is what Claude Code does, what ChatGPT's agent mode aims at, what most of the frontier labs are racing toward. Thinking Machines is making a different argument. They are saying that for most real work, the human has to stay in the loop — clarifying, correcting, redirecting — and that today's interfaces literally do not have room for the human to do that. The model is too slow to interrupt, too rigid to listen, too blind to see. So humans get squeezed out, not because the work does not need them, but because there is no door for them to walk back through.

An interaction model is, at heart, the door. It is an architectural commitment to the idea that bandwidth between humans and AI matters as much as raw intelligence does — that the channel through which knowledge, intent, and judgment flow between you and the model is itself a thing that can be designed, scaled, and trained, not just a UI problem to paper over with prettier animations. If they are right, the next decade of AI products will not look like smarter chatbots; they will look like collaborators you can talk over, point at, gesture toward, and be interrupted by.

The Caveats Worth Keeping in Mind

A few honest caveats are worth saying out loud. This is a research preview, not a product you can use today. Thinking Machines plans to open a limited preview in the coming months, with a wider release later in 2026. Very long sessions still strain the streaming-session design because audio and video accumulate context quickly. The whole experience falls apart on a bad network connection, since 200ms latency budgets do not survive packet loss. And the current model, at 12B active parameters, is not the most intelligent thing in the world. The team is honest that their bigger pretrained models are currently too slow to serve at this latency, and that scaling up while staying real-time is the open work ahead.

There are real safety questions too. A model that can speak whenever it likes is a model that has to learn when not to. A model that watches you continuously is a model whose data privacy story has to be airtight. A real-time interface stresses alignment in ways a turn-based one does not, because the model is constantly choosing whether and when to act, with no human-imposed pause to vet what it produces. Thinking Machines has done early work on modality-appropriate refusals and long-horizon robustness, but this is a research frontier, not a solved problem.

The Takeaway

If you remember only one thing from this piece, let it be this. The voice modes you use today are an LLM standing behind a microphone, taking turns the way it has always taken turns in a chat window — just with a costume of speech recognition and synthesis bolted on. An interaction model dissolves that costume. It treats time, sound, sight, and speech as one continuous river that the model lives inside, rather than a sequence of separate buckets it dips into one at a time. Whether or not Thinking Machines is the lab that wins this race, the direction they are pointing in is almost certainly the direction the whole field has to go. The walkie-talkie was always a workaround. We were just waiting for someone to build the table.